Incidents Are Complex. Your Response Shouldn't Be.

Transparent AI agents that investigate, diagnose, and resolve — every step visible, editable, and reproducible.

Watch an Incident Unfold

A production pod is crash-looping. Here's what DagKnows does — step by step.

CrashLoopBackOff on production pod

PagerDuty fires — user-facing service is down

AI builds a causal DAG — not a linear checklist

Three hypotheses tested in parallel

Resource Exhaustion

- Memory exceeds limits: within bounds
- CPU throttled: normal usage

Application Crash

- Exit code 1 (runtime error)
  - Missing environment variable
    - ConfigMap updated 23 min ago: root cause
    - Upstream dependency timeout: all reachable
  - Liveness probe failing: probe OK

Infrastructure Issue

- Node pressure or eviction: node healthy
- Image pull failure: image exists

Structured RCA posted to Slack

Evidence chain + rollback recommendation

Every command, every decision — fully visible and replayable.
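To make the parallel-hypothesis idea concrete, here is a minimal Python sketch of checks fanning out concurrently and their findings being collected into an evidence list. It is an illustration only, not DagKnows' actual engine: the `Finding` type, the three check functions, and their canned results are hypothetical and simply mirror the walkthrough above.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable


@dataclass
class Finding:
    hypothesis: str
    check: str
    is_cause: bool
    evidence: str


# Hypothetical checks with canned results that mirror the walkthrough;
# real checks would query the cluster instead.
def check_memory() -> Finding:
    return Finding("Resource Exhaustion", "Memory exceeds limits", False, "within bounds")


def check_configmap() -> Finding:
    return Finding("Application Crash", "ConfigMap updated 23 min ago", True, "root cause")


def check_node() -> Finding:
    return Finding("Infrastructure Issue", "Node pressure or eviction", False, "node healthy")


def investigate(checks: list[Callable[[], Finding]]) -> list[Finding]:
    """Run every check concurrently and return findings in submission order."""
    with ThreadPoolExecutor(max_workers=max(1, len(checks))) as pool:
        futures = [pool.submit(check) for check in checks]
        return [future.result() for future in futures]


findings = investigate([check_memory, check_configmap, check_node])
root_causes = [f for f in findings if f.is_cause]

for f in findings:
    print(f"{f.hypothesis}: {f.check} -> {f.evidence}")
```

Because each check returns a structured finding rather than free text, the same list can feed both the root-cause decision and the evidence chain in the final report.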

Transparent & Reproducible, Always

See the exact code, commands, and reasoning the AI uses. Edit any step. Same inputs, same workflow, every time. No black boxes.

Resource Exhaustion

- Check memory limits: within bounds

      metrics = k8s.get_pod_metrics("payment-svc", ns)
      exceeded = metrics.memory_mb > limits.memory_mb

- Check CPU throttling: normal

      stats = k8s.top_pod("payment-svc", ns)
      throttled = stats.cpu_percent > 90

Application Error

- Container exit code

      pod = k8s.read_namespaced_pod("payment-svc", ns)
      state = pod.status.container_statuses[0].last_state
      exit_code = state.terminated.exit_code
      # exit_code = 1

- Verify env variables: root cause

      cm = k8s.read_config_map("payment-svc-config", ns)
      diff = compare_versions(cm, previous_version)
      # KEY_NAME removed in commit a3f8c1d (23 min ago)

- Liveness probe config: probe OK

      probe = k8s.get_liveness_probe("payment-svc", ns)
      failures = k8s.get_event_count(pod, "Unhealthy")

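Infrastructure Issue

- Node health: healthy

      node = k8s.read_node(node_name)
      # Healthy nodes still list MemoryPressure with status "False",
      # so the status must be checked as well as the type.
      pressure = any(
          c.type == "MemoryPressure" and c.status == "True"
          for c in node.status.conditions
      )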

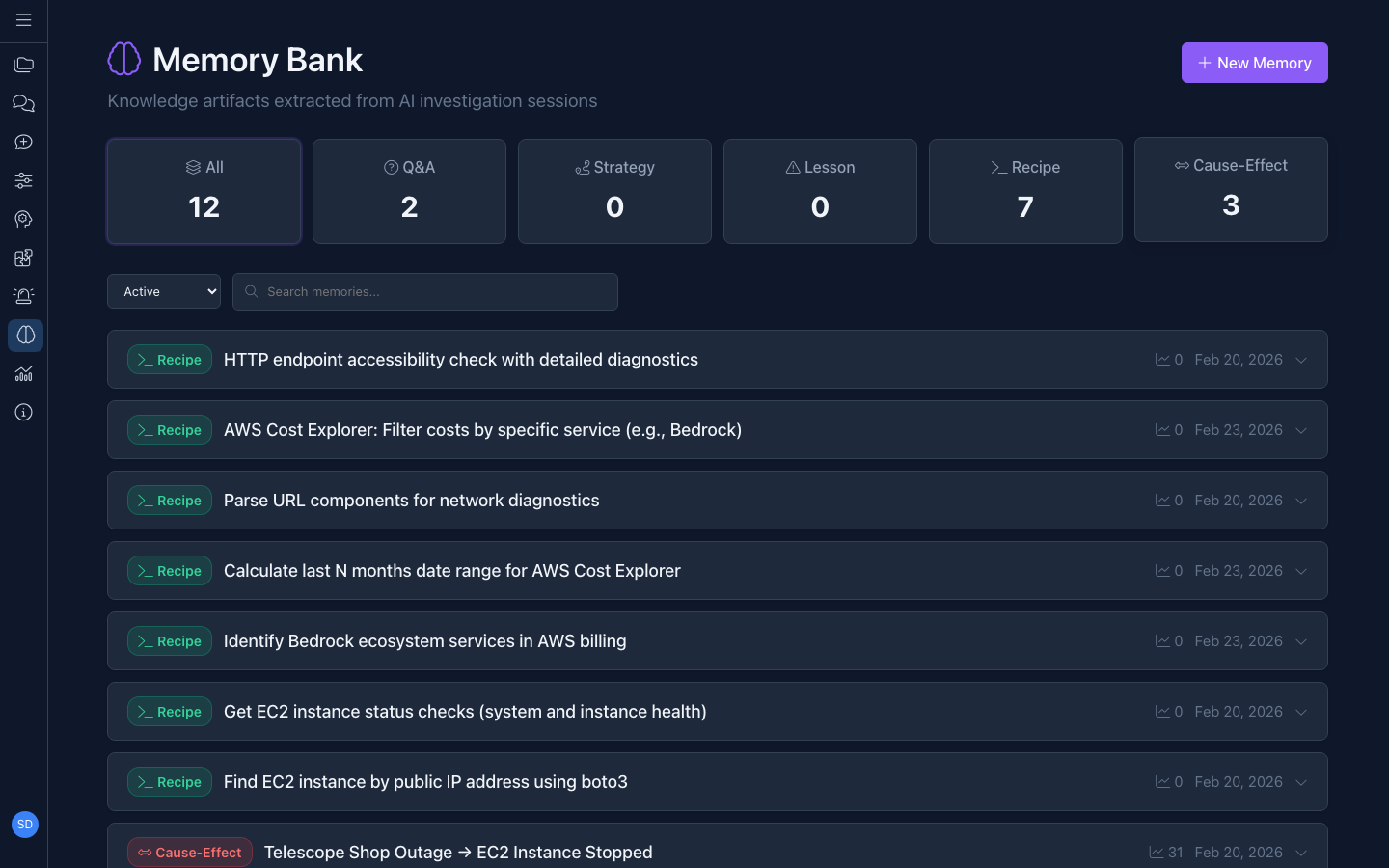
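The "Verify env variables" step relies on a `compare_versions` helper that the page does not show. As a rough sketch of what such a comparison might do, assuming both ConfigMap versions are available as plain key/value dictionaries, the function below classifies keys as removed, added, or changed. The name `diff_config` and the sample data are hypothetical.

```python
def diff_config(previous: dict[str, str], current: dict[str, str]) -> dict[str, list[str]]:
    """Classify keys as removed, added, or changed between two ConfigMap versions."""
    removed = sorted(previous.keys() - current.keys())
    added = sorted(current.keys() - previous.keys())
    changed = sorted(
        key for key in previous.keys() & current.keys()
        if previous[key] != current[key]
    )
    return {"removed": removed, "added": added, "changed": changed}


# Illustrative data only; these keys and values are made up.
previous = {"DB_HOST": "db.internal", "KEY_NAME": "value", "LOG_LEVEL": "info"}
current = {"DB_HOST": "db.internal", "LOG_LEVEL": "debug"}

result = diff_config(previous, current)
print(result)
# {'removed': ['KEY_NAME'], 'added': [], 'changed': ['LOG_LEVEL']}
```

A removed key that the container reads at startup is a strong root-cause candidate, which is the conclusion the walkthrough reaches for `KEY_NAME`.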

And every investigation makes the next one faster. DagKnows remembers.

Memory That Gets Smarter Over Time

Every incident builds your operational memory. Successful investigations auto-promote to reusable playbooks. Known failure patterns match to proven resolutions. When people leave, the knowledge stays.
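One simple way to picture matching a known failure pattern to a proven resolution is a similarity lookup over observed symptoms. The sketch below is an assumption about how such matching could work, not a description of DagKnows' internals: the `Playbook` type, the signal names, the Jaccard scoring, and the 0.5 threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Playbook:
    name: str
    signals: frozenset  # symptoms seen when this pattern occurred before
    resolution: str


def best_match(observed: set, playbooks: list, threshold: float = 0.5) -> Optional[Playbook]:
    """Return the playbook whose signals overlap most with the observed ones.

    Overlap is measured as Jaccard similarity; scores below `threshold`
    are treated as no match.
    """
    best, best_score = None, threshold
    for playbook in playbooks:
        union = observed | playbook.signals
        score = len(observed & playbook.signals) / len(union) if union else 0.0
        if score >= best_score:
            best, best_score = playbook, score
    return best


# Illustrative playbooks; names and signals are made up.
playbooks = [
    Playbook("missing-env-var",
             frozenset({"CrashLoopBackOff", "exit_code_1", "configmap_changed"}),
             "Roll back the ConfigMap"),
    Playbook("oom-kill",
             frozenset({"CrashLoopBackOff", "exit_code_137", "memory_over_limit"}),
             "Raise the memory limit"),
]

match = best_match({"CrashLoopBackOff", "exit_code_1", "configmap_changed"}, playbooks)
print(match.name if match else "no known pattern")
```

A threshold keeps weak overlaps (for example, sharing only `CrashLoopBackOff`) from being reported as a known pattern.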

100+ Built-In AI Agents

Best practices for AWS, Kubernetes, Grafana, Terraform, and more — injected into every generated tool. Create your own AI agents effortlessly.

Not just for troubleshooting. Use DagKnows for routine tasks, cost analysis, and understanding your systems.

Beyond Troubleshooting

The same AI-driven approach works for cost optimization, compliance checks, capacity planning, and everyday operational queries.

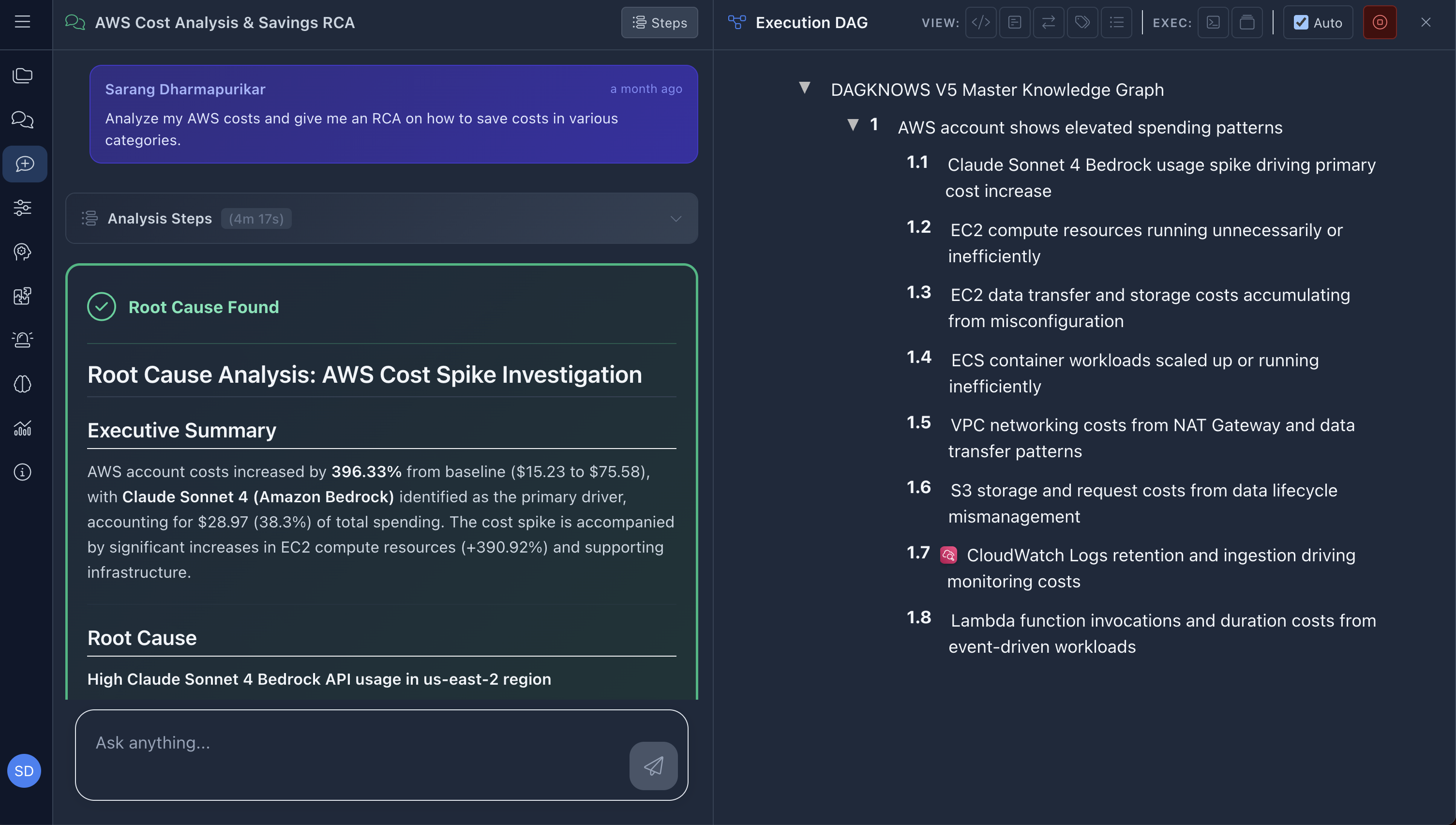

Cloud Cost Spike (AWS)

Prompt: "Analyze my AWS costs and give me an RCA on how to save costs in various categories."

Ready for your environment? DagKnows deploys wherever your data lives.

Deploy Your Way

SaaS or on-prem. Same full-featured platform either way.

[Deployment diagram. Labels: websocket, execution, winrm, APIs, on-prem or cloud, various applications]

Enterprise Security & Governance

Compliance-ready from day one.

RBAC & Workspaces

Role-based access with workspace isolation and per-task permissions.

SSO Integration

Google, Okta, GitHub, LDAP/AD. User accounts are created automatically on first login.

Approval Gates

State-changing actions require explicit human sign-off.

Full Audit Trails

Every action logged. Who, what, when, why. Exportable.

API Access Tokens

Scoped JWT tokens for programmatic access and CI/CD.

Credential Vault

Secure credential storage. Never exposed in logs.
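As an illustration of how an approval gate and an audit trail can work together, the sketch below refuses state-changing actions that lack a named approver and records who, what, when, and why for every attempt. It is a minimal example under stated assumptions, not the product's API: `run_action`, `AuditLog`, `ApprovalRequired`, and the sample actions are hypothetical names.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Optional


class ApprovalRequired(Exception):
    """Raised when a state-changing action has no human sign-off."""


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, who: str, what: str, why: str) -> None:
        self.entries.append({
            "who": who,
            "what": what,
            "when": datetime.now(timezone.utc).isoformat(),
            "why": why,
        })


def run_action(name: str, action: Callable[[], str], *, state_changing: bool,
               requested_by: str, reason: str, log: AuditLog,
               approved_by: Optional[str] = None) -> str:
    """Run `action`, blocking state-changing ones that lack an approver."""
    if state_changing and approved_by is None:
        log.record(requested_by, f"BLOCKED {name}", reason)
        raise ApprovalRequired(name)
    log.record(approved_by or requested_by, name, reason)
    return action()


log = AuditLog()

# Read-only actions run without sign-off.
run_action("read pod status", lambda: "ok", state_changing=False,
           requested_by="agent", reason="diagnosis", log=log)

# A state-changing action without an approver is blocked and logged.
blocked = False
try:
    run_action("rollback configmap", lambda: "rolled back", state_changing=True,
               requested_by="agent", reason="restore KEY_NAME", log=log)
except ApprovalRequired:
    blocked = True

# With an approver, the same action proceeds.
result = run_action("rollback configmap", lambda: "rolled back", state_changing=True,
                    requested_by="agent", reason="restore KEY_NAME", log=log,
                    approved_by="alice")
```

Logging the blocked attempt as well as the approved one keeps the trail complete even when nothing was changed.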

Getting Started Is Simple

Connect

Point your alerts at DagKnows and deploy the proxy. No firewall changes needed.

Build

Import runbooks or let AI generate them from incidents.

Respond

Start deterministic. Graduate to AI at your own pace.

See It In Action

Get a personalized walkthrough with your team's real-world scenarios.

Request a Demo